TIME Dec 15, Noon

LOCATION SuperNova Kino, room 406, 4th floor, Narva mnt 27

An inspiring EEVR community event organised by MEDIT, with presentations, lively discussions, and technical and artistic demos, highlighted by visiting Finnish media artist Hanna Haaslahti (middle) and producer Marko Tandefelt (right).

Announcement by Madis Vasser:

EEVR #21 will once again find itself in familiar territory on the fourth floor of the BFM school in Tallinn, but this time around our host is MEDIT – TLU Center of Excellence in Media Innovation and Digital Culture. We’ll be mixing film, photogrammetry, and some very interesting hardware. Everyone interested in VR/AR is very welcome! The event is free, but do click the attend button early if you plan to show up! Go to FB.

On the schedule:

* Hanna Haaslahti (http://www.hannahaaslahti.net/) – some cool photogrammetry projects

* Madis Krisman & Johannes Kruusma (Avar.ee) – some more cool photogrammetry projects

* Rein Zobel (MaruVR.ee) – VR Days 2018 recap, etc.

Demos:

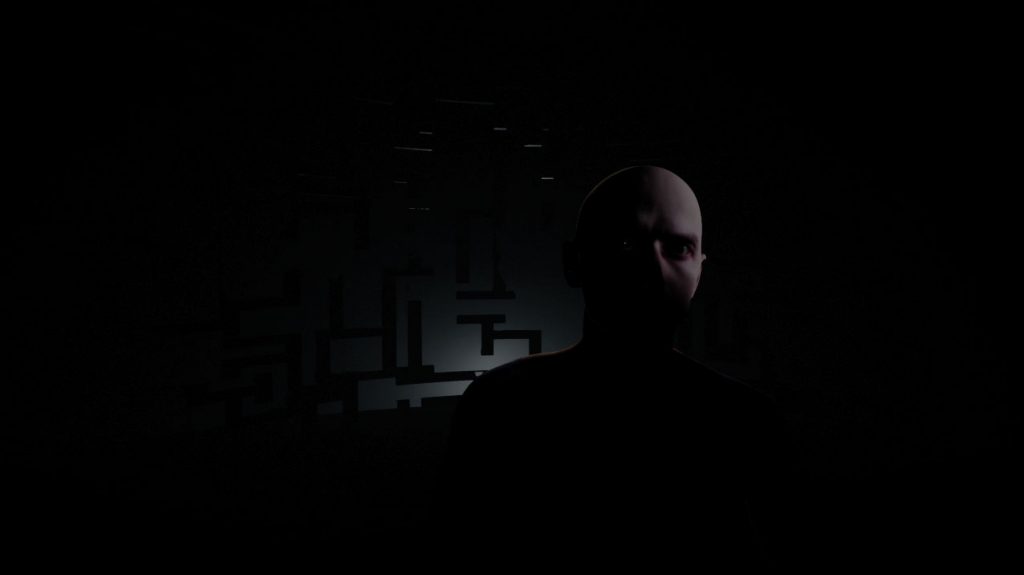

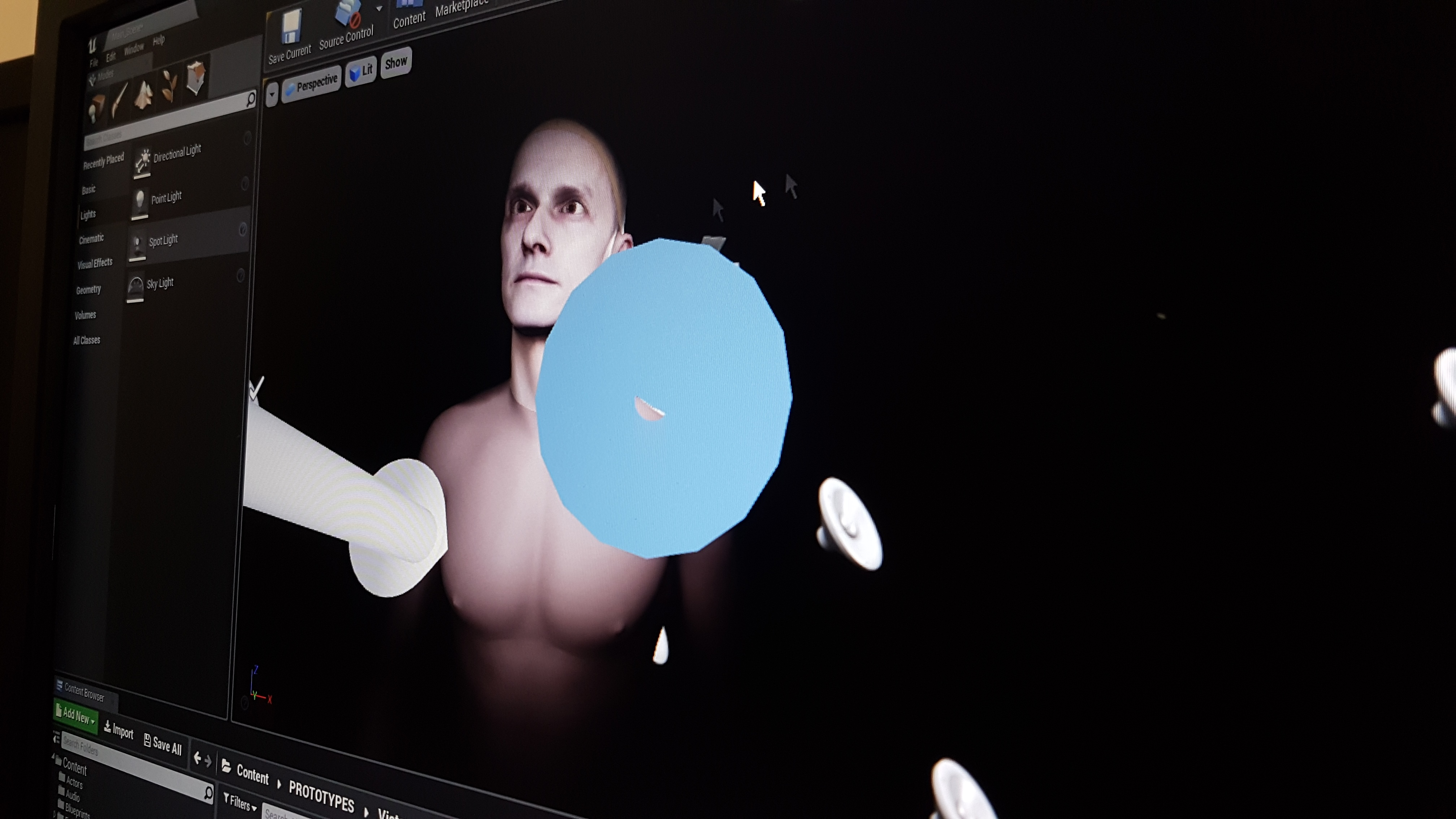

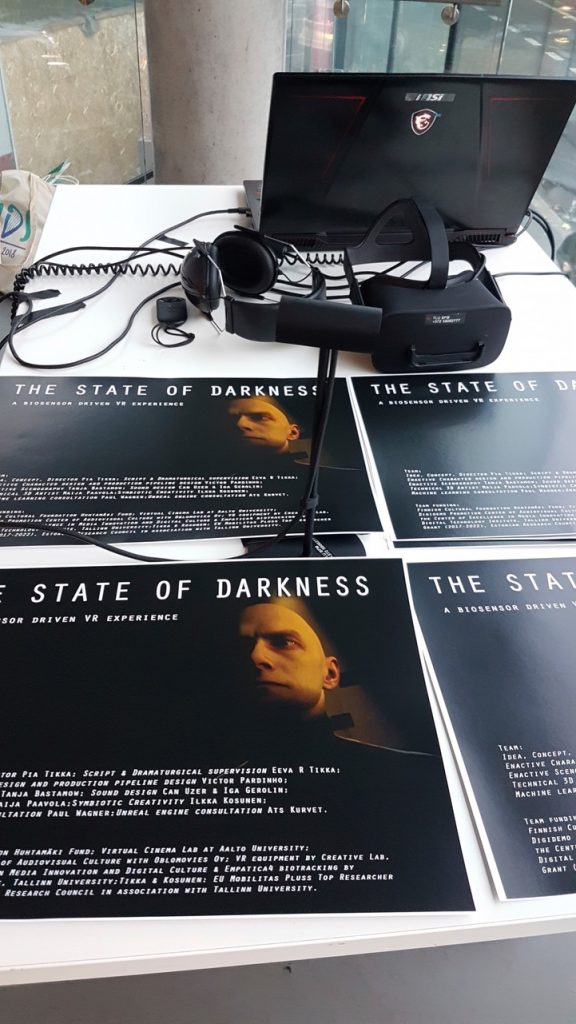

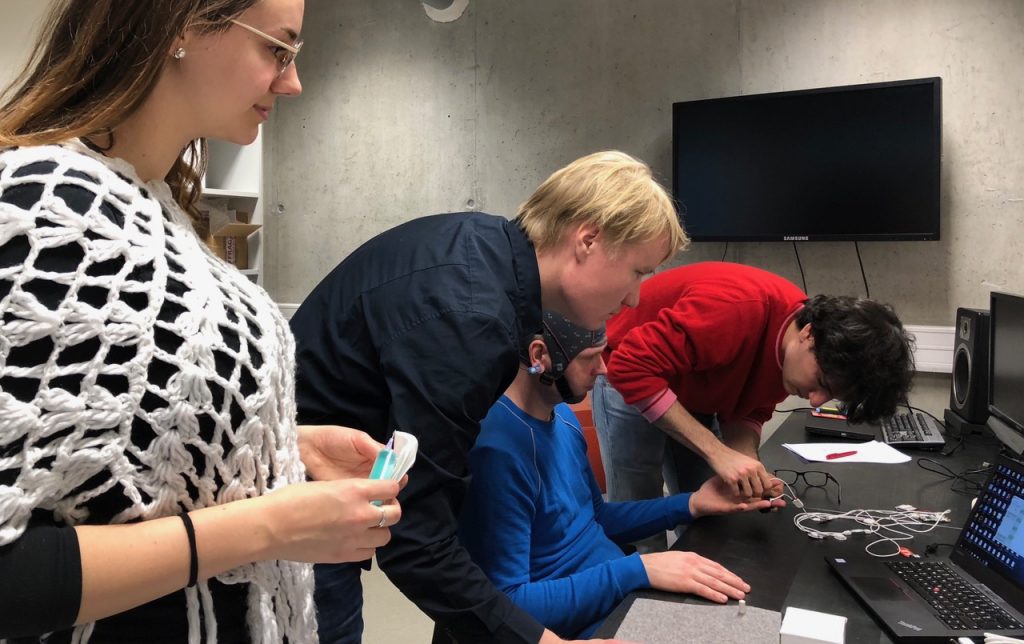

* State of Darkness VR – Enactive Virtuality Research Group

* Magic Leap (courtesy of https://www.operose.io/)

* “Hands-on” with some prototype hardware (top secret)

Highlighting:

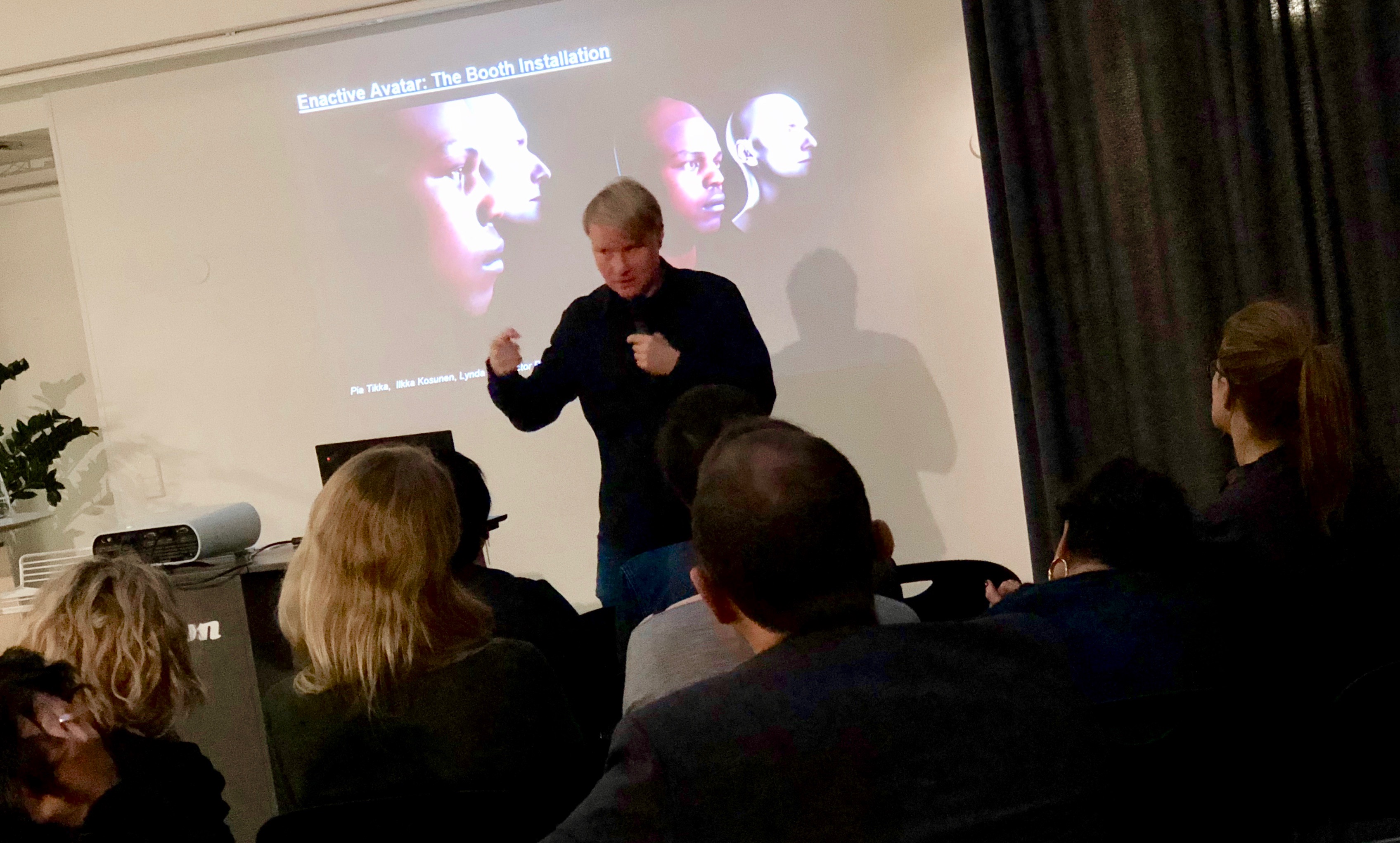

CAPTURED

Captured is a narrative simulation about social injustice where your digital double has a role to play. In the installation, people are captured as 3D avatars who become actors in a scenario where individual freedom is taken over by collective instincts.

Team

Hanna Haaslahti is a Finnish media artist working with ideas from technological theater, expanded image and interaction. She holds an MFA from Medialab at the University of Art and Design Helsinki (Aalto). She currently lives and works in Helsinki. She has been artist-in-residence at MagicMediaLab, Brussels (2000), Nifca NewMediaAir, St. Petersburg (2003), Cité internationale des arts, Paris (2008) and SculptureShock, organized by the Royal British Society of Sculptors, London (2015). She received an honorary mention in the Vida 6.0 Art and Artificial Life competition (2003) and was selected for the 50 best category of the ZKM Medien Kunst Preis (2003). She has also received the most prestigious Finnish media art award, the AVEK Award (2005).

Marko Tandefelt is a Helsinki-based concept designer, educator and musician with extensive experience in the art, design, media and technology fields. His interests include concept design, sensor-based interface prototyping, immersive multisensory cinema, and experimental visualization systems.

Marko lived in New York for 20+ years, working at companies such as NEC R&D Labs, ESI Design, Antenna Design and the Finnish Cultural Institute. From 2007 to 2015 Marko worked as the Director of Technology & Research/Senior Technology Manager at Eyebeam Art & Technology Center. Marko taught Master's thesis courses in the MFADT program at Parsons School of Design in New York from 2001 until 2016.

In his native Helsinki, Finland, Marko has worked since 2016 as a Technology Consultant and Producer on various interactive projects, including Hanna Haaslahti’s real-time 3D body-scanning installation system “Captured”. Marko currently works at Kunstventures as a media art producer, concept designer and prototyper.

Marko holds a B.M. degree, summa cum laude, in Music Technology from NYU, and a Master’s degree from the Interactive Telecommunications Program (ITP) at NYU Tisch School of the Arts Film & TV School. He is a longtime member of ACM, AES, IEEE, SIGGRAPH, and SMPTE, and has worked as a paper reader and jury member for SIGGRAPH and ACE conferences.